
Everything posted by Dan Scholes

-

The PDS Geosciences Node will be hosting three peripheral events during LPSC 2024 (March 11-15, 2024, The Woodlands Waterway Marriott Hotel and Convention Center, The Woodlands, TX).

PDS Geosciences Node Updates for Data Providers and Users (Tuesday 3 PM)
Join the PDS Geosciences Node as we cover the latest on data archiving, preservation, and distribution. We will touch on the Node’s support for data providers and the planetary science user community and will share what is happening with the Node’s online tools and services. The session will be in the Creekside Park meeting space on Tuesday at 3 PM.

PDS Geosciences Node: Office Hours for Questions & Feedback (Wednesday 10 AM)
Meet members of the PDS Geosciences Node, have your PDS questions answered, learn about submitting data to the PDS, explore and walk through the Node’s online data search and retrieval tools, and give the Node your feedback. We are here to help! The session will be in the Panther’s Creek meeting space on Wednesday at 10 AM.

PDS Geosciences Node Updates for Data Providers and Users (Wednesday 3 PM)
Join the PDS Geosciences Node as we cover the latest on data archiving, preservation, and distribution. We will touch on the Node’s support for data providers and the planetary science user community and will share what is happening with the Node’s online tools and services. The session will be in the Creekside Park meeting space on Wednesday at 3 PM.

Stop by our LPSC posters:
- Thomas C. Stein and Feng Zhou, Updates to the PDS Analyst's Notebook (#1255)
- June Wang et al., Latest Updates to the PDS Geosciences Node’s Orbital Data Explorer (#1406)
- J. Ward et al., NASA PDS Geosciences Node Status Update (#1453)

Tagged with: meetings, pds geosciences node (and 1 more)

-

The PDS Geosciences Node has a new quick start user guide for viewing PDS images and exporting them to other formats. The guide is intended to help new users learn to use freely available tools for basic viewing and format conversion of PDS images. Document Link (PDF): Viewing PDS Images and Exporting to Other Formats
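For readers curious about what such viewers do under the hood, here is a minimal Python sketch (not the method in the guide) that reads a PDS3 image with an attached label and writes it out as a binary PGM file, a simple format most image programs can open. It assumes 8-bit samples, a single band, no line prefix or suffix bytes, and an `^IMAGE` pointer given as a plain 1-based record number; the function names are hypothetical.

```python
import re

# Matches simple PDS3 "KEYWORD = VALUE" lines, including pointers like ^IMAGE.
KEYVAL = re.compile(r"^\s*(\^?\w+)\s*=\s*(.+?)\s*$")


def parse_label(text: str) -> dict:
    """Return a flat KEYWORD -> value dict from a PDS3 label.

    OBJECT nesting is ignored, so a keyword repeated in several objects
    keeps its last value. Parsing stops at the END statement.
    """
    values = {}
    for line in text.splitlines():
        if line.strip() == "END":
            break
        match = KEYVAL.match(line)
        if match:
            values[match.group(1)] = match.group(2).strip('"')
    return values


def image_to_pgm(raw: bytes) -> bytes:
    """Convert an 8-bit PDS3 image with an attached label to binary PGM (P5)."""
    # latin-1 maps every byte to a character, so binary data cannot break decoding.
    lab = parse_label(raw.decode("latin-1"))
    if int(lab["SAMPLE_BITS"]) != 8:
        raise ValueError("this sketch only handles 8-bit samples")
    lines, samples = int(lab["LINES"]), int(lab["LINE_SAMPLES"])
    # Assumes ^IMAGE is a plain 1-based record number (e.g. "^IMAGE = 3").
    start = (int(lab["^IMAGE"]) - 1) * int(lab["RECORD_BYTES"])
    pixels = raw[start:start + lines * samples]
    if len(pixels) != lines * samples:
        raise ValueError("file is shorter than the label describes")
    header = f"P5\n{samples} {lines}\n255\n".encode("ascii")
    return header + pixels
```

Usage would look like `Path("out.pgm").write_bytes(image_to_pgm(Path("example.img").read_bytes()))` with `pathlib.Path` and a hypothetical file name. For 16-bit, multi-band, or detached-label products, the free tools described in the guide are the better route.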

Tagged with: pds, image viewing (and 1 more)

-

The ODE REST interface has been updated to version 2.1.5.

Update Summary:
- Added the ability to filter product count and product queries by PDS4 Product LID, Bundle LID, and Collection LID.
- Changed the observation time and product creation time filters so they no longer use wildcards. This change should have minimal impact on users; it only affects queries that provided a single date/time value with a wildcard. Any minimum value provided is now used as a true minimum, and any maximum value as a true maximum.

This update aligns with recent changes to the ODE website. The updated help document is found here: https://oderest.rsl.wustl.edu/ODE_REST_V2.1.5.pdf

For questions, contact us at oderest@wunder.wustl.edu.
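For scripted access, here is a minimal Python sketch that builds a product query URL with explicit minimum and maximum observation times, reflecting the true-minimum/true-maximum behavior described in the update summary. The base URL and the parameter names (`query`, `target`, `minobtime`, `maxobtime`, `output`) are assumptions for illustration, as is passing the new PDS4 LID filters through `extra`; please confirm every name against ODE_REST_V2.1.5.pdf before relying on it.

```python
from datetime import datetime
from typing import Optional
from urllib.parse import urlencode

# Assumed endpoint; confirm it in ODE_REST_V2.1.5.pdf.
ODE_REST_BASE = "https://oderest.rsl.wustl.edu/live2/"
TIME_FORMAT = "%Y-%m-%dT%H:%M:%S"


def build_product_query(target: str, min_obs: datetime, max_obs: datetime,
                        extra: Optional[dict] = None) -> str:
    """Build a product query URL with explicit observation-time bounds.

    All parameter names here are assumptions for illustration; `extra`
    carries any further filters (e.g. the PDS4 LID filters) by name.
    """
    if min_obs > max_obs:
        raise ValueError("minimum observation time is after the maximum")
    params = {
        "query": "product",
        "target": target,
        # Both bounds are sent explicitly; since v2.1.5 each one is applied
        # as a true minimum or maximum rather than as a wildcard.
        "minobtime": min_obs.strftime(TIME_FORMAT),
        "maxobtime": max_obs.strftime(TIME_FORMAT),
        "output": "JSON",
    }
    params.update(extra or {})
    return ODE_REST_BASE + "?" + urlencode(params)
```

For example, `build_product_query("mars", datetime(2020, 1, 1), datetime(2020, 1, 31))` returns a URL you can open with any HTTP client. Rejecting an inverted range locally is a design choice that catches typos before a request is sent.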

-

Direct URL links to files hosted by the PDS Cartography and Imaging Sciences Node have been updated in the ODE database.

Old path to archives: https://pds-imaging.jpl.nasa.gov/data/
New path to archives: https://planetarydata.jpl.nasa.gov/img/data/

Links to the previous URL will redirect to the new path, but some users' software was having issues following the redirects. It will take some time for the links to be updated in our coverage KML files and shapefiles, as well as in the help documentation. In the meantime, the automatic redirects should handle most user needs. Please let us know if you need any assistance.
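If your own scripts or saved link lists still point at the old path and your software has trouble with the redirects, a minimal Python sketch like this one can rewrite them. It assumes everything after `/data/` maps one-to-one from the old path to the new path listed in this post; the function name is hypothetical.

```python
OLD_PREFIX = "https://pds-imaging.jpl.nasa.gov/data/"
NEW_PREFIX = "https://planetarydata.jpl.nasa.gov/img/data/"


def update_imaging_url(url: str) -> str:
    """Rewrite an old Imaging Node archive URL to the new archive path.

    Assumes the part after /data/ is unchanged between the two paths.
    URLs that do not start with the old prefix are returned untouched.
    """
    if url.startswith(OLD_PREFIX):
        return NEW_PREFIX + url[len(OLD_PREFIX):]
    return url
```

Because non-matching URLs pass through unchanged, the function can be applied safely to a whole list of mixed links.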

-

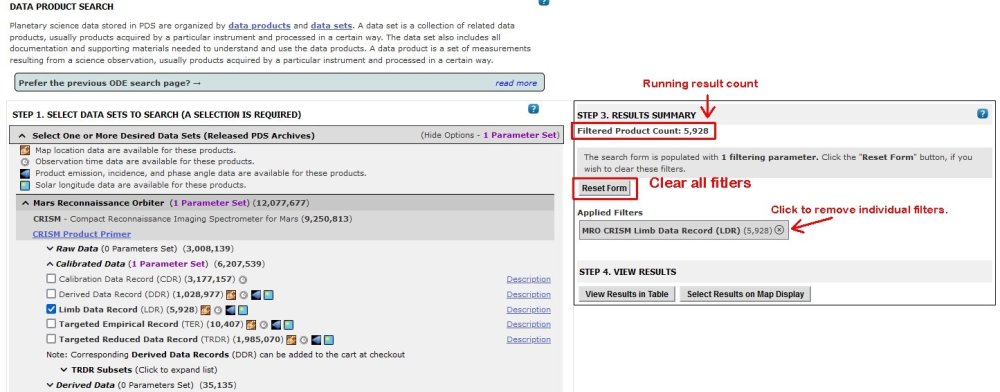

The ODE product search update that uses faceted searching is now live on the primary website. The search result count updates as the user applies filters. All applied filters can be removed with the "Reset Form" button, which is now visible on the right side of the screen, and individual filters can be removed by clicking each applied filter in that same area.
- Mars ODE Product Search
- Mercury ODE Product Search
- Lunar ODE Product Search
- Venus ODE Product Search

Contact the ODE development team directly at ode@wunder.wustl.edu if you have questions or encounter any issues with the website. Thanks!

Tagged with: ode update, product search (and 2 more)

-

Minor back-end code updates have been made to the ODE Diviner RDR Query tool and the ODE LOLA RDR Query tool. The Diviner Query tool will no longer output records with an invalid calibrated brightness temperature (flagged with a value of -9999). This change aligns the tool with recent data releases, which include more of these values. See this page for more about the brightness temperature issue: https://pds-geosciences.wustl.edu/missions/lro/diviner.htm In addition, output query performance has been slightly improved. Please contact us at ode@wunder.wustl.edu with any questions.
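If you still have query output from before this change, you can drop the flagged records yourself. This is a minimal Python sketch, assuming CSV output with a header row; the column name `tb` and the sample rows are hypothetical, so check the header of your own file.

```python
import csv
import io

INVALID_TB = -9999.0  # flag value for an invalid calibrated brightness temperature


def drop_invalid_tb(rows, column="tb"):
    """Yield only the rows whose brightness temperature is not the -9999 flag.

    `rows` is any iterable of dicts (e.g. a csv.DictReader); `column` is a
    hypothetical column name.
    """
    for row in rows:
        if float(row[column]) != INVALID_TB:
            yield row


if __name__ == "__main__":
    sample = "utc,tb\n2010-01-01T00:00:00,250.5\n2010-01-01T00:00:01,-9999\n"
    for row in drop_invalid_tb(csv.DictReader(io.StringIO(sample))):
        print(row)
```

Comparing parsed floats rather than strings means variants such as `-9999.000` are also caught.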

Tagged with: lro lola, lro diviner (and 1 more)

-

ODE's Granular Data System (GDS) queuing and processing services have been moved to a faster processing server. Users may notice performance improvements on requests to the ODE GDS system. The GDS system allows users to request aggregate subsets of data points from select product types with long data track observations. The system supports MOLA PEDR, LOLA RDR, Diviner RDR, and MLA Geolocated Science Query product types. The system update applies to direct queries to these services through the ODE GDS REST interface, as well. https://oderest.rsl.wustl.edu/GDSWeb/ Please send questions or report any issues to ode@wunder.wustl.edu.

-

The ODE Beta of the new faceted product search is available for user testing and review: https://ode.rsl.wustl.edu/beta Features include data set counts, along with product counts and value ranges for the date/time and angle filters, all based on the current search criteria. The results summary and view results section on the right side of the product search screen lets users quickly see the applied filters, total result count, and results summary. The section also includes buttons to view the search results in a table or on a map. The ODE development team is interested in your feedback. Direct questions and feedback to ode@wunder.wustl.edu.
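The core idea behind faceted search is recomputing counts and value ranges over whatever still matches the current criteria. The Python sketch below illustrates that idea on an in-memory list of dicts. It is not how ODE is implemented (a production site would typically compute this in its database), and the field names `instrument`, `pt`, and `emission` are hypothetical.

```python
from collections import Counter


def _matching(products, filters):
    """Products whose fields equal every value in `filters`."""
    return [p for p in products if all(p.get(k) == v for k, v in filters.items())]


def facet_counts(products, filters, facet):
    """Total number of matches plus a per-value count for one facet field."""
    matching = _matching(products, filters)
    return len(matching), Counter(p[facet] for p in matching)


def facet_range(products, filters, field):
    """(min, max) of a numeric field among matches, or None if nothing matches."""
    values = [p[field] for p in _matching(products, filters)]
    return (min(values), max(values)) if values else None
```

Each time a filter is added or removed, calling these functions again gives the updated counts and ranges, which is what lets the interface show how many results each remaining choice would produce.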

-

Is there a way to utilize Aspera with a direct download?

Dan Scholes replied to Nathan Weinstein's topic in For data users

Hi Nathan, Thanks for posting the question. Email me at scholes@wustl.edu, and we can look at some options to help you download from our archive. Thanks, Dan

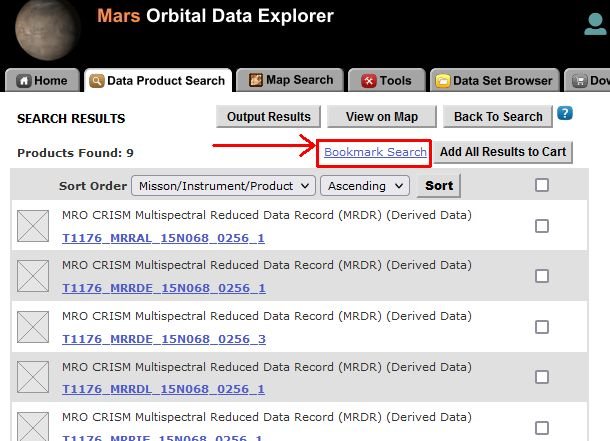

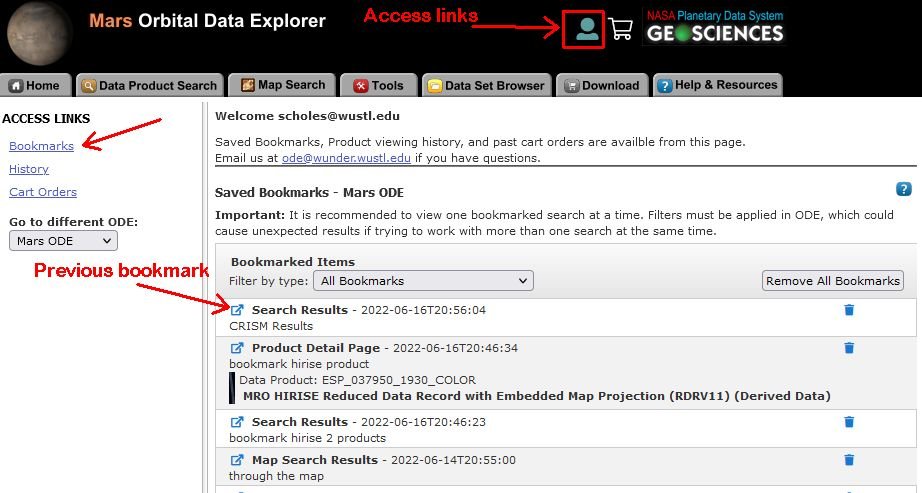

An optional user login has been added to ODE. It's free and simple to use! With an account, users can save product searches, map searches, and product details pages, and can access their past cart history and previously viewed product detail pages. Click the anonymous user icon in the ODE banner to create an account or sign in. Additional user account information is available in the ODE help, or email us at ode@wunder.wustl.edu with questions.

Links to ODE versions:
- Lunar ODE
- Mars ODE
- Mercury ODE
- Venus ODE

As a quick example, here is how to save a product search result list as a bookmark (you must be logged in):
1. Click the "Bookmark Search" link.
2. Enter an optional description and save the bookmark.
3. Open the user icon menu in the ODE banner to access saved bookmarks, history, and previous cart orders.
4. In the list of saved bookmarks, click a bookmark to open the saved location in a new browser tab.

Tagged with: ode, new feature (and 1 more)

-

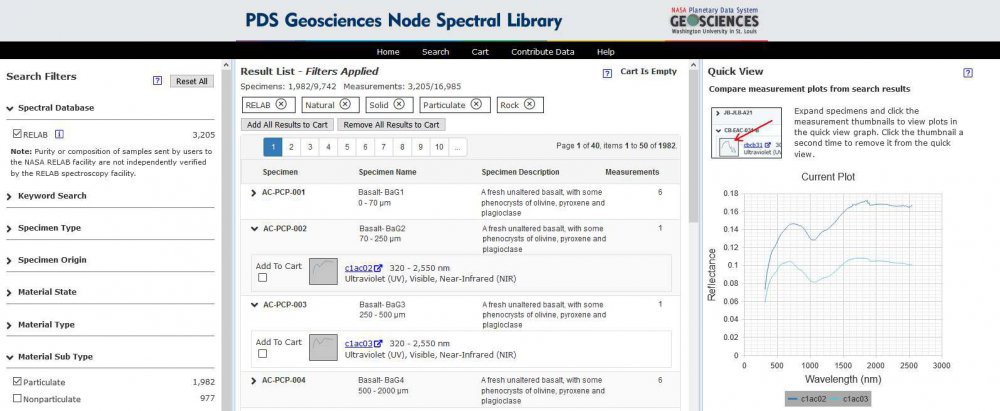

The PDS Geosciences Node Spectral Library has been updated to include a recent RELAB spectral data delivery to the existing RELAB spectral library data bundle. https://pds-geosciences.wustl.edu/speclib/urn-nasa-pds-relab/ The additions include 1,436 new specimens and 3,483 new measurements.

-

A periodic RELAB spectral data delivery has been added to the existing RELAB spectral library data bundle. https://pds-geosciences.wustl.edu/speclib/urn-nasa-pds-relab/ The additions include 1,436 new specimens and 3,483 new measurements. The new measurements have been added to the PDS Geosciences Node Spectral Library.

-

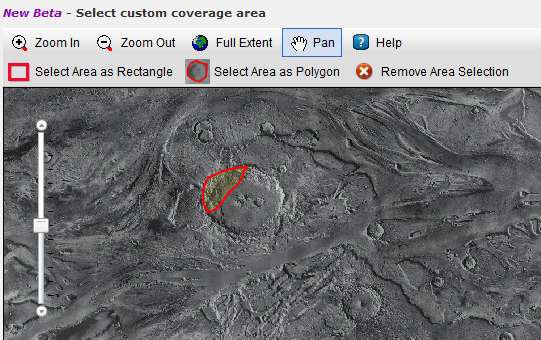

ODE's MRO Coordinated Observation Search interface has been updated to include an interactive map location selection option. Users can zoom in to a desired search region and select a point, rectangle, or freehand polygon search area. https://ode.rsl.wustl.edu/mars/indextools.aspx

Tagged with: mro, coordinated observation (and 3 more)

-

Release 54 from the Mars Reconnaissance Orbiter mission includes new data for CRISM, SHARAD, and Gravity/Radio Science. The data are online at https://pds-geosciences.wustl.edu/missions/mro.

Tagged with: mro, release announcment (and 3 more)

-

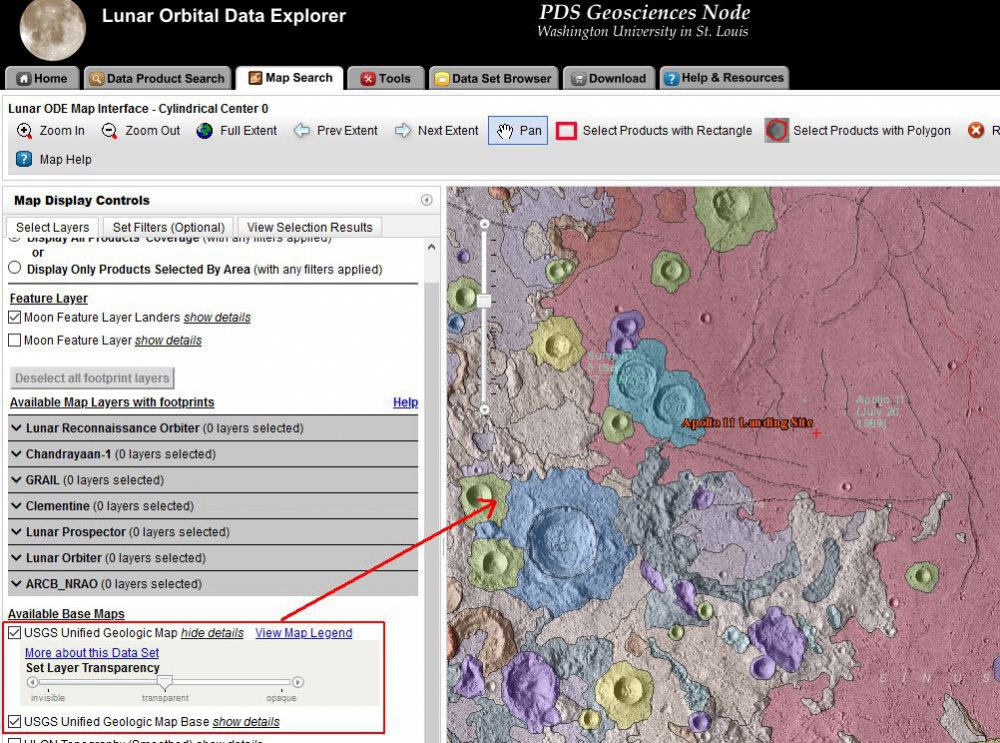

We are pleased to announce that the USGS Unified Geologic Map of the Moon is now available through the Lunar ODE map search. The map is displayed as two map layers in ODE:
1. The first layer contains unit contacts, geologic unit polygons, linear features, and unit and feature nomenclature annotation.
2. The second layer, which we call the base layer, is a shaded-relief product derived from SELENE (Kaguya) Terrain Camera stereo (equatorial, ~60 m/pix) and LOLA altimetry (north and south polar, 100 m/pix).

-

We have released a new version of our MakeLabels tool. The new 6.2 version includes additional features to help with the creation of PDS labels. The program and documentation can be found on the PDS Geosciences Node's MakeLabels page.

Version 6.2 updates include:
- The output label destination directory is created if it does not already exist, provided the user has the appropriate permissions.
- Any template label tags that are not replaced (due to syntax errors or missing columns in the spreadsheet) are listed in the post-processing summary report.
- The MakeLabels GUI now has a resizable report window.
- The report summary has been moved to the end of the processing output report.
- Template tags now include an option to convert fields from the source spreadsheet to upper or lower case.
- A new template tag allows a single line to be hidden if its corresponding field is empty in the spreadsheet (example: <name><!-- |specimen_id| OR HIDE-THIS-LINE --></name>).
- Show-if and hide-if template tags no longer need to be left-aligned in the template; they can be indented and spaced along with the surrounding XML.
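To make the hide-if-empty behavior concrete, here is a small Python sketch that mimics it for the single tag form shown in the example. It is a hypothetical illustration of the described behavior only, not MakeLabels' actual implementation or its full template syntax; the function name and the treatment of lines with several tags are assumptions.

```python
import re

# The hide-if-empty tag form from the 6.2 notes, e.g.
#   <name><!-- |specimen_id| OR HIDE-THIS-LINE --></name>
HIDE_TAG = re.compile(r"<!--\s*\|(\w+)\|\s+OR\s+HIDE-THIS-LINE\s*-->")


def render_template(template: str, record: dict) -> str:
    """Fill hide-if-empty tags in a label template from one spreadsheet row.

    A line is dropped when any of its tagged fields is empty or missing;
    otherwise each tag is replaced by its field value. Indentation is kept.
    """
    out = []
    for line in template.splitlines():
        fields = HIDE_TAG.findall(line)
        if fields:
            values = {f: str(record.get(f, "")).strip() for f in fields}
            if not all(values.values()):
                continue  # hide this line: a tagged field is empty
            line = HIDE_TAG.sub(lambda m: values[m.group(1)], line)
        out.append(line)
    return "\n".join(out)
```

Because the tag is matched anywhere in the line, an indented tag inside nested XML works the same way as a left-aligned one, in line with the indentation change in this release.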

-

We are pleased to announce the release of the PDS Geosciences Node Spectral Library website. See the release announcement under the main forum announcements. Feel free to ask questions and provide feature requests on this forum. Thanks!

Tagged with: spectral library, pds (and 2 more)

-

We are pleased to announce the release of the new PDS Geosciences Node Spectral Library website. The PDS Geosciences Node Spectral Library is a database of laboratory spectra submitted by various data providers. It currently includes spectra from the Reflectance Experiment Laboratory (RELAB) at Brown University. Additional data sets will be added to the website in the coming months. The website allows users to search the catalog of specimens and measurements using a faceted search. Results can be viewed in a quick-view summary or on full detail pages. Measurement data can be downloaded individually or through a cart system.

Tagged with: laboratory spectra, relab (and 1 more)

-

Hi Christy,

I just tried the Windows version of the software on my Windows 10 machine, and it would not run properly for me either. I think we can assume the software has not kept up with the latest operating systems. I do have a couple of alternative options.

I have confirmed that the files can be viewed with the PDS4 Viewer: https://sbnwiki.astro.umd.edu/wiki/PDS4_Viewer Using this tool, the data files can be exported as CSV, tab-delimited, and additional formats. Keep in mind that the archive you mentioned is both PDS3 and PDS4 compatible. PDS3 tools use the .lbl label, while PDS4 tools use the .xml label. The XML label is needed for the PDS4 Viewer.

You could also write a script in IDL, MATLAB, Python, or another programming language to parse the data files. The column specifications are found in the label files. The PDS3 label refers to format (.fmt) files, which are found in the archive's label directory (https://pds-geosciences.wustl.edu/messenger/mess-e_v_h-grns-3-grs-cdr-v1/messgrs_2001/label/). The product you mentioned refers to https://pds-geosciences.wustl.edu/messenger/mess-e_v_h-grns-3-grs-cdr-v1/messgrs_2001/label/grs_cal_sh3.fmt. The PDS4 label contains all of the column definitions.

Let me know if you have additional questions.

Best wishes,
Dan
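As a starting point for the script route, here is a minimal Python sketch that slices fixed-width ASCII records using START_BYTE and BYTES values copied from a PDS3 label or .fmt file. It assumes an ASCII table; if the label describes a binary table, each column would instead need to be decoded according to its DATA_TYPE (for example with Python's `struct` module). The column names and widths below are hypothetical.

```python
from typing import List, NamedTuple


class Column(NamedTuple):
    """One column definition, as read from a PDS3 label or .fmt file."""
    name: str
    start_byte: int  # 1-based START_BYTE, as written in PDS3 labels
    nbytes: int      # BYTES


def parse_fixed_width(record: str, columns: List[Column]) -> dict:
    """Split one fixed-width ASCII record into {column name: stripped text}."""
    return {
        c.name: record[c.start_byte - 1:c.start_byte - 1 + c.nbytes].strip()
        for c in columns
    }


if __name__ == "__main__":
    # Hypothetical column layout; copy the real values from the label.
    cols = [Column("channel", 1, 4), Column("counts", 5, 8)]
    print(parse_fixed_width("  12  345.50", cols))
```

The values come back as strings so that each column can be converted with the type the label specifies (for example `int` or `float`).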

-

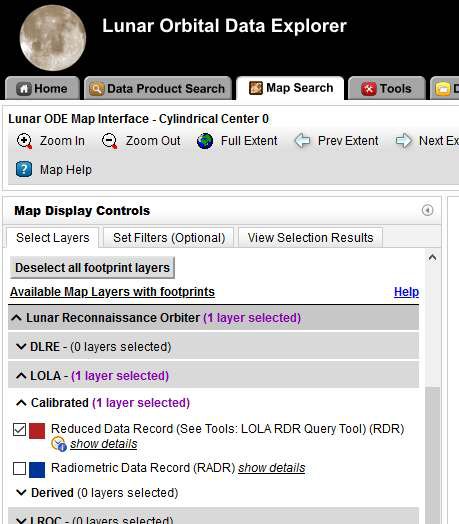

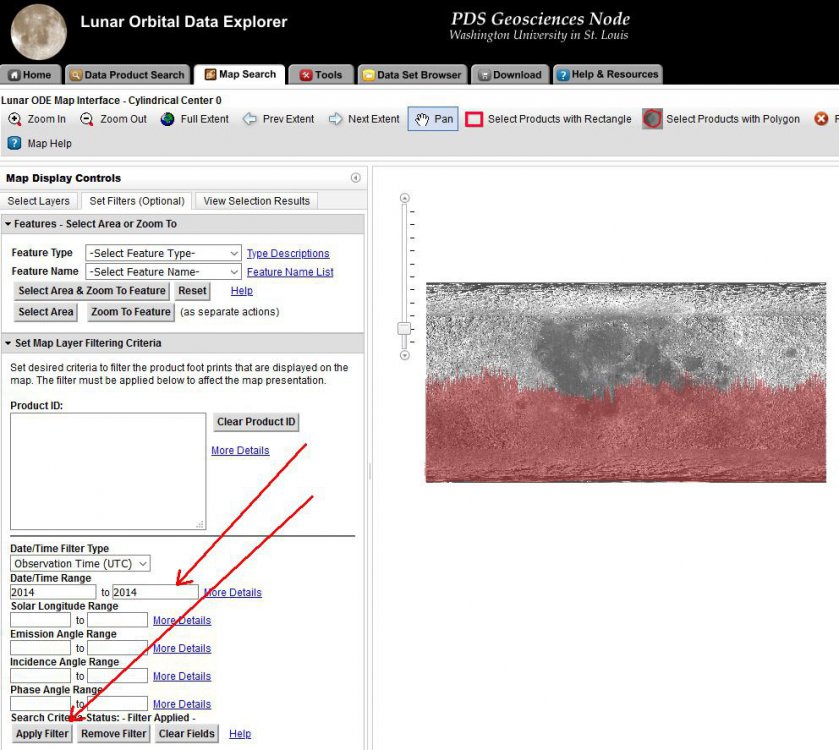

Hi there,

I am one of the developers who maintains the ODE website. I ran the same query in the ODE LOLA RDR Query tool and received the same response, which I believe is reasonable. Remember, you are limiting the output to just one day. The tool is not set up very well for global coverage searches, but they can be done for narrow time windows. If you wish to query 2014-2019, I would suggest specifying a region of interest; that is closer to the intended purpose of the query tool.

The limited coverage for 2014 and later is expected: the orbiter was in a different orbit, and there were also the instrument issues you mentioned. To see the difference in coverage for later years, you can use the ODE map search page to filter LRO LOLA RDR coverage to specific time ranges.

Link to map search interface: https://ode.rsl.wustl.edu/moon/indexMapSearch.aspx
Map interface limited to LOLA RDR (image mapSearch1.jpg)
LOLA RDR filtered to 2014 observations (image mapSearch2.jpg)

Thanks,
Dan

-

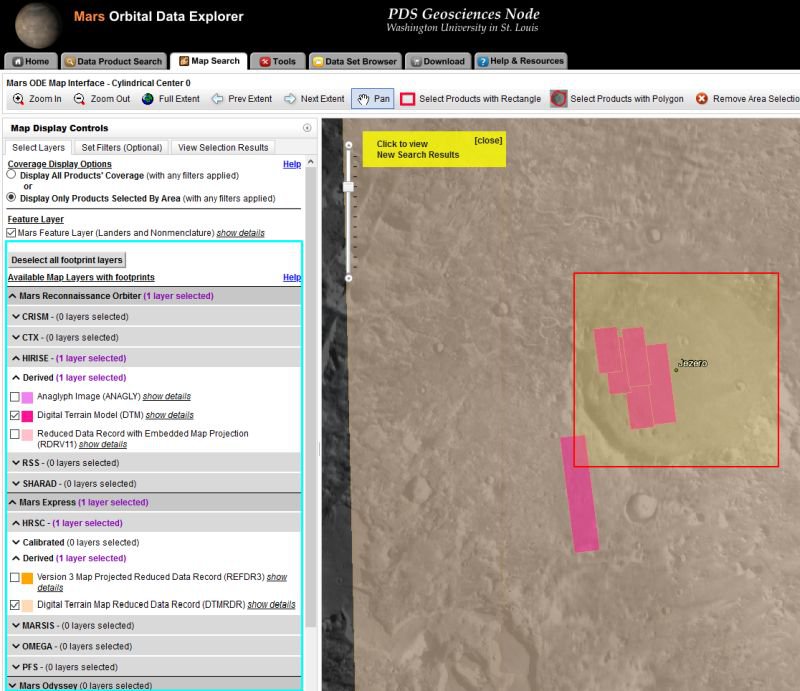

The ODE map search has been updated to group product coverage map layers by mission/instrument/processing level. This map layer organization matches the product search page.
- Mars Orbital Data Explorer Map Search
- Lunar Orbital Data Explorer Map Search
- Mercury Orbital Data Explorer Map Search
- Venus Orbital Data Explorer Map Search

The image above shows a Mars ODE Map Search example.

Tagged with: mars map search, lunar map search (and 3 more)

-

The PDS Geosciences Node and the LROC Data Node have established a faster data transfer method. As a result, ODE cart downloads of LROC data will be fulfilled noticeably faster than in the past. We encourage users to give it a try through the Lunar ODE website.

-

New functionality has been added to the ODE product search page that allows users to select a freehand polygon location for coverage searches. Similar freehand polygon search filtering has been added to the map search page.
- Mars ODE Product Search - https://ode.rsl.wustl.edu/mars/indexProductSearch.aspx
- Mercury ODE Product Search - https://ode.rsl.wustl.edu/mercury/indexProductSearch.aspx
- Lunar ODE Product Search - https://ode.rsl.wustl.edu/moon/indexProductSearch.aspx
- Venus ODE Product Search - https://ode.rsl.wustl.edu/venus/indexProductSearch.aspx
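A coverage search has to decide whether data fall inside the drawn polygon. A basic building block for that is the even-odd (ray casting) point-in-polygon test, sketched below in Python for a single point such as a product's center. This is an illustrative sketch, not ODE's implementation: it treats longitude/latitude as planar coordinates and ignores longitude wrap-around and the poles, and the function name is hypothetical.

```python
def point_in_polygon(lon, lat, polygon):
    """Even-odd (ray casting) test: is (lon, lat) inside the polygon?

    `polygon` is a list of (lon, lat) vertices in drawing order; the edge
    from the last vertex back to the first is implied.
    """
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the point's latitude can be crossed.
        if (y1 > lat) != (y2 > lat):
            # Longitude where this edge crosses the horizontal ray.
            x_cross = x1 + (lat - y1) * (x2 - x1) / (y2 - y1)
            if lon < x_cross:
                inside = not inside  # each crossing toggles inside/outside
    return inside
```

The test works for concave freehand shapes as well as simple rectangles, since it only counts how many polygon edges a ray from the point crosses.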

-

Hi Raj Patel, Thank you for contacting us about your question. First, I confirmed there are no .QUB files in the THEMIS IRBTR (Infrared Brightness Temperature Record) data set. Newer versions of GDAL support converting a .QUB file to GeoTIFF (.tif). Here is an example of the command:
gdal_translate -of GTiff D:\test\data\I00818001RDR.QUB D:\test\data\I00818001RDR.tif
The first link on the following FAQ describes the tools ASU's Mars Space Flight Facility recommends for opening THEMIS images: http://viewer.mars.asu.edu/faq#t6n18 Let me know if you have further questions. Best wishes, Dan
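To convert many .QUB files at once, the same gdal_translate command can be scripted. This Python sketch builds one command per file and, unless `dry_run` is left on, runs it with `subprocess.run`. It assumes GDAL's command-line tools are on your PATH; the function names are hypothetical. Note that the `*.QUB` pattern is case-sensitive on Linux.

```python
import subprocess
from pathlib import Path


def gdal_translate_cmd(src: Path, fmt: str = "GTiff", suffix: str = ".tif") -> list:
    """Build the gdal_translate argument list that converts src to fmt."""
    dst = src.with_suffix(suffix)
    return ["gdal_translate", "-of", fmt, str(src), str(dst)]


def convert_folder(folder: Path, dry_run: bool = True) -> list:
    """Convert every *.QUB file in folder; with dry_run, only return the commands."""
    commands = [gdal_translate_cmd(p) for p in sorted(folder.glob("*.QUB"))]
    if not dry_run:
        for cmd in commands:
            # check=True raises CalledProcessError if gdal_translate fails.
            subprocess.run(cmd, check=True)
    return commands
```

For example, `convert_folder(Path(r"D:\test\data"))` lists the commands it would run, and adding `dry_run=False` performs the conversions. Passing arguments as a list avoids shell quoting problems with Windows paths.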


Tagged with: ENVI, Map Projection (and 2 more)

-

Hi Deepak, Thank you for the valuable suggestions and feedback. Our staff will be discussing these suggestions over the next couple of weeks to assess our current capabilities and prioritize future ODE updates. I will be able to provide you with more details after these discussions. We will also have a booth at LPSC where your presentation ideas can be discussed, so please stop by the PDS booth during LPSC. Best wishes, Dan


Tagged with: pds data query, community connect (and 1 more)